How to Sync Sound and Video Like a Pro Editor

At its core, syncing sound and video is all about lining up a separately recorded audio track with its matching video clip. The old-school methods still work wonders: a hand clap or a clapperboard gives you one clear frame in the picture and a sharp, unmistakable spike in the audio waveform, making the two easy to align. Of course, modern software can also do the heavy lifting by automatically matching the audio waveforms from both sources.

Why Perfect Audio Sync Is Non-Negotiable

Perfectly synced audio isn't just a technical checkbox—it's the invisible glue holding your entire video together. When the sound and picture are in perfect harmony, your audience gets lost in the story. But when they're off by even a few frames, the illusion shatters.

Think about the last time you saw a video where the dialogue didn't quite match the speaker's lips. It’s jarring, right? That tiny delay instantly screams "amateur" and can make viewers question your credibility. This guide will go beyond the simple "how-to" and dig into the "why," because understanding this is fundamental, whether you're editing your first vlog or a complex multi-camera shoot.

The Real Impact of Bad Sync

Out-of-sync audio creates a seriously jarring experience. It’s a form of cognitive dissonance—what we see clashes with what we hear—and it immediately makes your content feel unprofessional and untrustworthy. Viewers have a subconscious expectation of quality, and bad sync is one of the first things to break it.

Here’s what you're up against with poor sync:

- Vanishing Viewers: An audience is far more likely to click away from a video that just feels "off."

- Lost Credibility: It makes the entire production feel sloppy, which can undermine the authority of your message.

- A Distracted Audience: Instead of absorbing your content, viewers are stuck trying to figure out why the audio and video don't line up.

Setting Yourself Up for Success

Before we even touch any software, let's talk about the simple habits that make life in the editing suite so much easier. A quick hand clap or a classic clapperboard slate at the start of every take gives you a sharp, clear reference point—both visually and audibly—to line up your tracks.

This isn’t a new trick. Filmmakers have been doing this for nearly a century. The challenge of syncing sound to picture goes all the way back to the early 1900s, when live musicians tried to keep up with silent films. It wasn't until the "talkies" arrived in the late 1920s that true synchronization became an absolute necessity, completely changing how stories were told on screen.

Pro Tip: Even if you're just shooting with your phone, a single, sharp clap before you start talking can save you a massive headache later. It’s a tiny habit that pays off big time in the edit.

Nailing this basic principle is one of the most crucial video editing tips for beginners and a skill that seasoned pros rely on daily. This guide will give you the practical skills you need to tackle any syncing challenge and make sure your final video is polished, professional, and ready to impress.

Mastering Sync Techniques In Your NLE

Once your footage is off the camera and the audio is off the recorder, the real work begins inside your non-linear editor (NLE). This is where you'll merge those separate files into a single, seamless clip. Getting your sound and video in sync can be as simple as a single click, or it might require a more hands-on, manual approach.

The good news? Modern NLEs like Premiere Pro, Final Cut Pro, and DaVinci Resolve are incredibly powerful. They give you multiple ways to nail perfect sync, so you can pick the right method for any project.

The Classic Manual Sync Method

Before automated tools became the norm, editors relied on their eyes and ears. Manual syncing is a fundamental skill that’s still incredibly useful, especially when automated methods fail or you're working with footage that lacks a clear audio reference.

It’s a pretty straightforward process:

- First, drag your video clip (with its low-quality camera audio) and your high-quality external audio clip onto separate tracks in your timeline.

- Next, zoom way in on the audio tracks until you can clearly see the waveforms. This visual map of the sound is your guide.

- Look for the sharp, obvious spike created by your slate or a hand clap. You should see a similar peak on both the camera's scratch audio and the external audio.

- Now, just nudge the external audio clip until its spike lines up perfectly with the one from the camera audio. Hit play—if it sounds like a single, unified track with no echo, you've nailed it.

Once everything is aligned, you can mute or delete the scratch audio track, leaving just the crisp, clean sound from your external recorder. This method gives you total control and is a rock-solid fallback when technology lets you down.
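
If you're curious what "lining up the spikes" looks like as arithmetic, here's a minimal Python sketch. It assumes both tracks are already loaded as plain lists of samples at the same sample rate, and that the clap is the loudest moment in each one. The function names are made up for illustration and aren't part of any NLE.

```python
def find_spike(samples, sample_rate):
    """Return the time (in seconds) of the loudest sample, assumed to be the clap."""
    peak_index = max(range(len(samples)), key=lambda i: abs(samples[i]))
    return peak_index / sample_rate


def nudge_for_alignment(camera_audio, external_audio, sample_rate):
    """Seconds to move the external clip so its spike lands on the camera's spike.

    Positive means slide the external clip later, negative means earlier.
    """
    return find_spike(camera_audio, sample_rate) - find_spike(external_audio, sample_rate)


# Toy data: one second of audio is 1000 samples here, just to keep numbers small.
rate = 1000
camera = [0.0] * 2000
camera[1200] = 0.9      # clap heard 1.2 s into the camera's scratch audio
external = [0.0] * 2000
external[450] = 1.0     # same clap heard 0.45 s into the external recording

nudge = nudge_for_alignment(camera, external, rate)
print(f"Move the external clip by {nudge:+.3f} s")
```

A positive result means the external clip needs to slide later on the timeline, which is exactly the nudge you'd make by hand.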

Unleashing Automated Sync Tools

While knowing how to sync manually is essential, automated tools are a massive time-saver, especially on bigger projects. Most professional NLEs can analyze and match audio waveforms for you, getting the job done in seconds.

For example, in a program like Adobe Premiere Pro, you can synchronize clips based on their start point (the "In point"), their end point (the "Out point"), or even by matching audio waveforms. When you have multiple audio sources, you can even tell the software which track to use as the primary reference.
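
Waveform matching is usually described as a cross-correlation: slide one track across the other and keep the position where they agree the most. The Python sketch below is a toy version of that idea, not the actual algorithm used by Premiere Pro or any other editor. `best_lag` and its parameters are hypothetical names, and the brute-force loop is only practical for short snippets.

```python
def best_lag(reference, other, max_lag):
    """Return the lag (in samples) where `other` best matches `reference`.

    A positive lag means the shared sound shows up that many samples later
    in `reference` than in `other`.
    """
    def score(lag):
        # Multiply overlapping samples and add them up; a high total means
        # the two waveforms line up well at this lag.
        total = 0.0
        for i, value in enumerate(other):
            j = i + lag
            if 0 <= j < len(reference):
                total += value * reference[j]
        return total

    return max(range(-max_lag, max_lag + 1), key=score)


# Toy data: the same short "sound" placed at different positions in two tracks.
pattern = [0.2, -0.5, 1.0, -0.7, 0.3]
reference_track = [0.0] * 50
other_track = [0.0] * 50
reference_track[20:25] = pattern   # sound starts at sample 20
other_track[8:13] = pattern        # same sound starts at sample 8

lag = best_lag(reference_track, other_track, max_lag=30)
print(f"Shift the second track {lag} samples later")
```

This also hints at why noisy or distant audio trips up auto-sync: the more the two recordings differ, the less the score stands out at the correct lag.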

To really fly through your edits, you have to get comfortable with your NLE's video editing tools. Knowing the software inside and out is what separates the pros from the amateurs.

A Quick Tip for Multi-Camera Shoots: If you're syncing multiple camera angles to a single audio source, the "Create Multi-camera Source Sequence" feature is a game-changer. Just select all your clips in the project bin, right-click, and let the software build a synced sequence for you based on the audio. It's that easy.

To give you a clearer picture, here's a quick breakdown of the common sync methods.

Comparison of Audio Sync Methods

| Sync Method | Best For | Pros | Cons |
|---|---|---|---|
| Manual Waveform | When auto-sync fails, or for clips without clear reference audio. | Full creative control, works with any footage. | Time-consuming, requires a visible sync point. |
| Automated Waveform | Most common scenarios, especially multi-camera shoots. | Extremely fast and generally accurate. | Can fail with noisy backgrounds or distant audio. |
| Timecode | Professional productions, multi-camera shoots, long recordings. | Frame-accurate precision, incredibly fast. | Requires special equipment and on-set setup. |
| Markers | Custom sync points when there's no slate or clear audio spike. | Flexible, allows for creative sync points. | Requires manual placement of markers during review. |

Each method has its place, and knowing which one to use in a given situation is a key part of an efficient workflow.

Working With Timecode And Markers

On more professional sets, timecode is the gold standard. Think of it as a clock that’s embedded into every single video and audio file.

When all your cameras and sound recorders are jam-synced to the same master clock, every file shares the exact same time reference. Back in the NLE, you just select the clips and use the "Synchronize by Timecode" function. The software instantly snaps everything into place on the timeline, down to the exact frame. It’s the most precise method out there, but it does require planning and the right gear during production.
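
To make the "shared clock" idea concrete, here's a small Python sketch of the math behind a timecode sync. It assumes non-drop-frame timecode in `HH:MM:SS:FF` form and a whole-number frame rate; drop-frame timecode needs extra handling that isn't shown. The function names are illustrative only.

```python
def timecode_to_frames(timecode, fps):
    """Convert non-drop-frame 'HH:MM:SS:FF' timecode to a total frame count."""
    hours, minutes, seconds, frames = (int(part) for part in timecode.split(":"))
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


def audio_offset_frames(video_start_tc, audio_start_tc, fps):
    """Frames by which the audio clip starts after the video clip.

    A negative result means the audio recorder was rolling first.
    """
    return timecode_to_frames(audio_start_tc, fps) - timecode_to_frames(video_start_tc, fps)


# Example start timecodes, invented for this sketch.
offset = audio_offset_frames("01:00:10:00", "01:00:08:12", fps=24)
print(f"Audio starts {offset} frames relative to the video")
```

Because both devices stamped their files from the same clock, the offset is a simple subtraction with no waveform analysis needed.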

You can also use markers as custom sync points. If you drop a marker on your video and audio clips at the exact same moment—maybe at a camera flash or a specific action—you can tell your NLE to align them based on those markers.

Understanding these different techniques is key to editing efficiently. Whether you're cutting a quick social clip or a complex interview, having these skills in your back pocket means you can handle any syncing challenge that comes your way. And if you're focused on short-form content, check out our guide on effective editing for YouTube Shorts to see how these principles apply.

How to Diagnose and Fix Audio Drift

You’ve been there. You sync your clips perfectly at the start, feeling great about your edit, only to discover the audio is completely out of whack by the end of the timeline. It’s maddening.

This issue is called audio drift, and it's one of the most common headaches for editors. The audio gradually falls out of sync, turning a perfect start into a mess just minutes later.

The good news? It’s not random. Audio drift is almost always caused by a technical mismatch between your camera and your external audio recorder. Understanding what's going on under the hood is the key to fixing it—and stopping it from happening again.

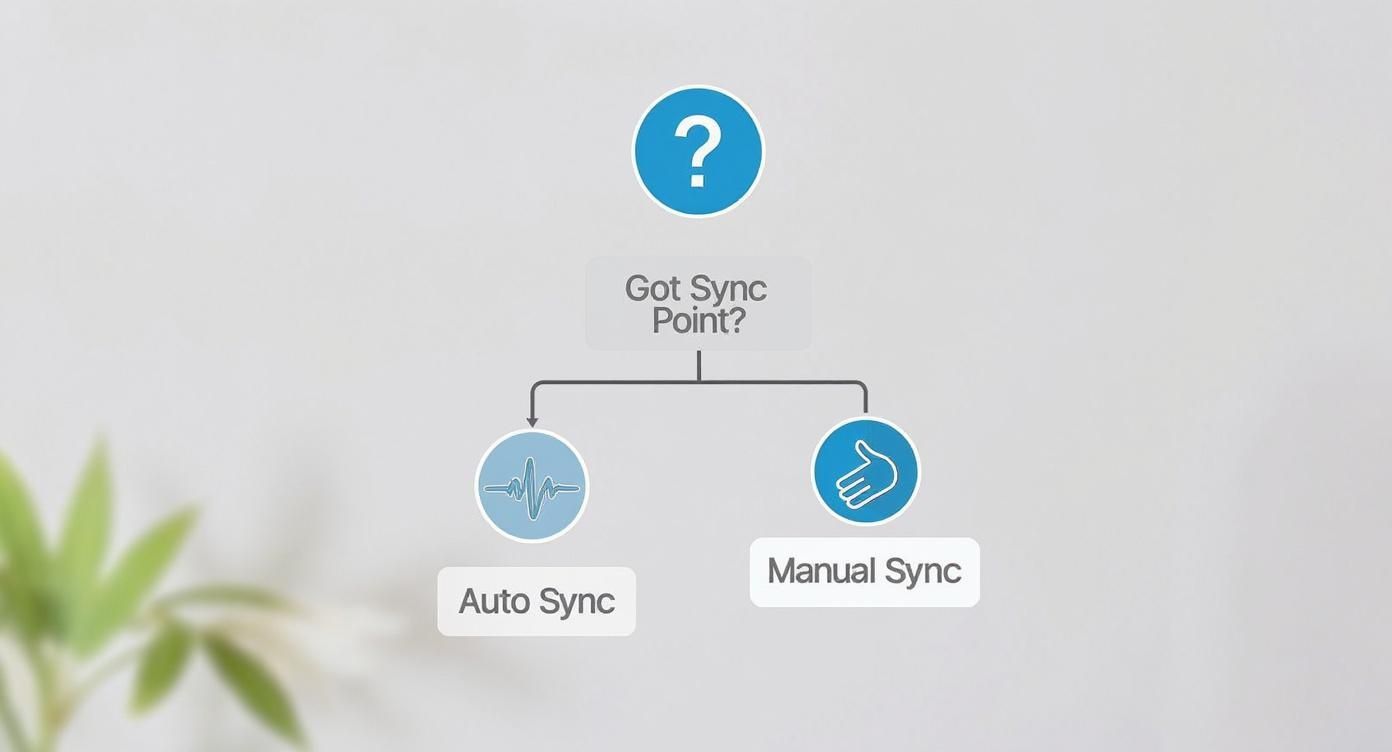

This flowchart gives a quick overview of your options when you hit a sync problem.

As you can see, if you have a clear reference point like a slate clap, automated tools are your friend. If not, manual syncing is always a reliable fallback.

Identifying the Causes of Audio Drift

So, why does drift happen? It's because your video and audio files are running at slightly different speeds. Even a tiny 0.1% difference becomes painfully obvious over a few minutes. Two main culprits are usually to blame.

- Variable Frame Rate (VFR): Many consumer cameras, and pretty much all smartphones, record with a variable frame rate. This helps save file space by adjusting the frame rate on the fly. The problem is your pro audio recorder captures sound at a rock-solid, constant rate. The two just don't play nice together, leading to drift.

- Mismatched Sample Rates: The other common cause is a disagreement in audio sample rates. Your camera might be recording its scratch audio at 44.1 kHz, while your external recorder is set to the professional video standard of 48 kHz. If your editing software doesn't convert one of them cleanly, that clip plays back at the wrong speed and the two tracks pull apart.
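
To see how quickly a small speed mismatch adds up, here's a rough Python sketch. It treats drift as a constant speed error between the two recordings, which is a simplification (variable frame rate footage can wander less predictably). The 40-millisecond threshold is an illustrative assumption, and the function names are hypothetical.

```python
def drift_seconds(elapsed_seconds, speed_error):
    """Sync error after `elapsed_seconds` when one clip runs `speed_error` fast or slow.

    speed_error is a fraction, so 0.001 means a 0.1% speed difference.
    """
    return elapsed_seconds * speed_error


def seconds_until_noticeable(speed_error, tolerance_seconds):
    """How long a clip can run before drift exceeds the chosen tolerance."""
    return tolerance_seconds / abs(speed_error)


five_minutes = drift_seconds(300, 0.001)
limit = seconds_until_noticeable(0.001, 0.040)
print(f"0.1% mismatch after 5 minutes: {five_minutes * 1000:.0f} ms of drift")
print(f"Drift passes 40 ms after about {limit:.0f} s")
```

With these numbers, a 0.1% mismatch produces about 300 ms of error after five minutes, which is why a clip that starts in perfect sync can look badly off by the end.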

The Fix: Rate Stretching and Resampling

Once you know the cause, fixing it requires a bit of digital surgery in your editing software. The goal is simple: stretch or shrink your external audio clip until it perfectly matches the length of your camera's scratch audio.

Here’s the workflow I use to correct drift:

- Line Up the Start: Sync the beginning of your external audio and camera audio waveforms perfectly. Use a clap or another sharp sound as your guide.

- Jump to the End: Go to the very end of the clips. You’ll almost certainly see that the waveforms are now misaligned. That’s your drift.

- Grab the Rate Stretch Tool: Select your external audio clip and switch to the Rate Stretch tool. In an editor like Premiere Pro, it lets you drag the end of the clip to change its duration without messing up the pitch.

- Align the End: Drag the end of your external audio until its final waveform peak lines up perfectly with the matching peak on the camera's scratch audio.

This process, sometimes called "conforming," adjusts your audio's speed by a tiny, unnoticeable amount (often less than 1%). Once it's done, your audio should stay locked in sync for the entire clip.
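
If you'd rather type a number than drag by eye, the stretch amount can be worked out from two sync points. This Python sketch assumes you've noted the time of one matching peak near the start and one near the end on both tracks; the function name and the example times are made up for illustration.

```python
def stretch_factor(camera_first, camera_last, external_first, external_last):
    """Duration multiplier for the external audio so it matches the camera audio.

    Each argument is the time (in seconds) of a matching peak on that track.
    A result below 1.0 means the external clip must get shorter.
    """
    camera_span = camera_last - camera_first
    external_span = external_last - external_first
    return camera_span / external_span


# Matching peaks are 600.0 s apart on the camera but 600.6 s apart on the recorder.
factor = stretch_factor(2.0, 602.0, 1.0, 601.6)
change_percent = (factor - 1.0) * 100
print(f"Scale the external clip's duration by {factor:.6f} ({change_percent:+.3f}%)")
```

In this example the change is about a tenth of a percent, well inside the "tiny, unnoticeable" range.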

For more complex audio issues, you might find that some of the best AI tools for content creators can help clean things up or even automate parts of the repair process.

Mismatched Frame Rates: A Different Beast

Don't confuse device-level audio drift with a straightforward frame rate mismatch. A frame rate mismatch is baked in from the very first frame and grows at a fixed, predictable rate. If you try to sync a clip shot at 23.976 fps with another at 24 fps, the 0.1% speed difference adds up to roughly 3.6 seconds per hour, and the clips will never stay aligned until you conform one to match the other.

Pro Tip: Before you start any project, check the properties of every single clip. Right-click on your files in your NLE and look at the frame rates and audio sample rates. Make sure they all match your project settings. This five-minute check can save you hours of frustration.

Broadcast standards put hard numbers on this. For TV, the commonly cited EBU R37 window allows audio to lead the picture by up to 40 milliseconds or lag behind it by up to 60 milliseconds. For feature films, the tolerance is even tighter, at about 22 milliseconds in either direction.
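
Those tolerances are easy to turn into a quick sanity check. The Python sketch below assumes a sign convention where a positive offset means the audio arrives early and a negative one means it arrives late; that convention, and the function names, are assumptions made for this example.

```python
def within_tv_window(audio_lead_ms):
    """True if the offset sits inside the +40 ms (early) to -60 ms (late) TV window."""
    return -60 <= audio_lead_ms <= 40


def within_film_window(audio_lead_ms):
    """True if the offset is within 22 ms in either direction."""
    return abs(audio_lead_ms) <= 22


def offset_in_frames(offset_ms, fps):
    """Express an offset in milliseconds as a number of frames."""
    return offset_ms * fps / 1000


for measured in (30, -50, -70):
    print(measured, within_tv_window(measured), within_film_window(measured))

print(f"40 ms at 25 fps is {offset_in_frames(40, 25)} frame(s)")
```

Thinking in frames helps put the numbers in perspective: at 25 fps, the whole "audio early" allowance is a single frame.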

By diagnosing these problems early, you can turn a frustrating sync error into a quick fix, ensuring your final edit is tight, polished, and professional.

Advanced Workflows for Complex Projects

When you move beyond a single camera and one audio source, your editing workflow has to level up. Complex projects—think multi-camera interviews, podcasts, or videos with layered voiceovers—demand a more organized and powerful approach. Nailing these advanced techniques is what separates the editors who fly through their projects from those who get bogged down in a sea of disorganized clips.

Juggling footage from two, three, or even more cameras plus a separate professional audio recorder might seem like a nightmare. But modern editing software makes it surprisingly straightforward. The whole process centers on creating what's called a multi-camera sequence—a single, neat container that holds all your synced video and audio tracks in one place.

Taming the Multi-Camera Beast

Before you even dream of syncing, get organized. The very first step in any multi-cam edit is to create a dedicated bin inside your project. Drag all your related video clips and your master audio file into it. Trust me, this little bit of housekeeping will save you from a world of chaos down the road.

With everything in its place, the heavy lifting is mostly automated. In professional NLEs like Premiere Pro or DaVinci Resolve, you can just highlight all the video clips and your external audio file at once. A quick right-click usually brings up an option like "Create Multi-camera Source Sequence."

This is where the real magic happens. The software will ask you how you want to sync everything up. Nine times out of ten, you’ll want to choose audio. It will analyze the low-quality "scratch" audio from each camera and perfectly align it with the high-quality waveform from your dedicated audio recorder. As long as every camera was recording some sound, this method is incredibly fast and accurate.

Key Takeaway: Always, always record scratch audio on every single camera, even if it sounds terrible. That throwaway audio track is the essential roadmap your software uses to sync everything automatically. Without it, you're looking at manually syncing every single clip, which can add hours of tedious work to your edit.
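
Once each camera's scratch audio has been matched against the master recording, building the synced sequence is mostly bookkeeping. Here's a minimal Python sketch of that last step. It assumes you already know how many seconds after the master audio each camera started rolling (negative if the camera started first); the clip names and the `timeline_starts` function are hypothetical.

```python
def timeline_starts(offsets_to_master):
    """Place every clip on a shared timeline so the earliest one starts at zero.

    offsets_to_master maps a clip name to the seconds it started recording
    after the master audio (negative if it started before).
    """
    starts = dict(offsets_to_master)
    starts["master_audio"] = 0.0
    earliest = min(starts.values())
    return {name: start - earliest for name, start in starts.items()}


# Example offsets, invented for this sketch.
placements = timeline_starts({"cam_a": 2.5, "cam_b": -1.0})
for name, start in placements.items():
    print(f"{name}: starts at {start:.1f} s")
```

Here camera B rolled first, so it sits at the head of the timeline and everything else is pushed later to stay lined up with it.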

Once the process finishes, you’ll have a brand-new multi-cam clip in your bin. Drag it onto your timeline, and you can switch between camera angles with a single keypress as the video plays, just like a live TV director. It's a game-changing way to edit interviews, live events, or panel discussions.

Syncing AI-Generated Voiceovers

A new challenge popping up more and more is syncing an AI-generated voiceover to a video. A human narrator naturally adjusts their pacing to match the visuals, but an AI voice is generated without ever seeing the picture. This means you have to manually time the video to fit the audio, which requires a slight shift in your editing mindset.

Instead of syncing audio to the video, you're now editing your video around the audio. The goal is to make the robotic precision of the AI voice feel natural and intentional.

Here are a few practical strategies to pull this off:

- Lock Your Visual Edit First: Get your visual edit as close to final as you can before you generate the voiceover. When you know the exact timing of your cuts, animations, and graphics, you have a perfect blueprint for the script.

- Generate in Small Batches: Don't create one giant, monolithic audio file. Generate your voiceover in smaller pieces—sentence by sentence or even paragraph by paragraph. This gives you the flexibility to add pauses and tweak the timing without having to re-generate the entire thing.

- Master the "J" and "L" Cut: These classic editing techniques are your absolute best friends here. A J-cut is when the audio from the next clip starts before the visuals change. An L-cut is the reverse, where the video cuts to a new shot but the audio from the previous one continues. Using them helps smooth out the transitions and makes the whole thing feel less jarring.

Fine-Tuning AI Voice Timing

Once you have your AI audio clips on the timeline, the real fine-tuning begins. Your go-to tools for this will be adding silence and adjusting the speed of individual clips.

If a visual needs a little more time to breathe on screen, don't hesitate to slice an audio clip and insert a second or two of silence between AI-generated sentences. These pauses make the delivery feel more deliberate and less like a machine gun.

For those moments where the timing is almost perfect, the rate stretch tool is your secret weapon. You can slightly speed up or slow down an audio clip, usually by no more than 5 to 10%, to nail the timing without making the voice sound weird. This is perfect for aligning a specific word with an on-screen graphic or action. Mastering this final touch is what makes an AI voiceover feel completely seamless.
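
The choice between padding with silence and nudging the speed can be written down as a simple rule of thumb. This Python sketch is one possible way to decide, assuming a conservative 5% cap on speed changes; the cap, the function name, and the returned fields are all illustrative assumptions rather than settings from any particular editor.

```python
def fit_voice_clip(clip_seconds, slot_seconds, max_change=0.05):
    """Suggest how to fit a voice clip into the time its visual has on screen.

    Returns a dict with:
      speed   - playback speed to apply (1.0 means unchanged)
      silence - seconds of silence to add after the clip
      overrun - seconds the clip still runs long, to fix in the video edit
    """
    needed_speed = clip_seconds / slot_seconds
    if abs(needed_speed - 1.0) <= max_change:
        # Close enough: a small speed tweak fills the slot exactly.
        return {"speed": needed_speed, "silence": 0.0, "overrun": 0.0}
    if needed_speed < 1.0:
        # Clip is much shorter than the slot: keep it natural and pad with silence.
        return {"speed": 1.0, "silence": slot_seconds - clip_seconds, "overrun": 0.0}
    # Clip is much longer: speed up as far as allowed and report what is left over.
    fastest = 1.0 + max_change
    return {"speed": fastest, "silence": 0.0, "overrun": clip_seconds / fastest - slot_seconds}


print(fit_voice_clip(4.1, 4.0))   # nearly fits: small speed change
print(fit_voice_clip(3.0, 5.0))   # too short: add silence
print(fit_voice_clip(6.0, 4.0))   # too long: extend the visual instead
```

When the result reports an overrun, that's the cue to lengthen the shot or trim the script rather than push the voice any faster.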

Syncing Audio for Social Media and Mobile Edits

When you're creating for TikTok, Instagram Reels, or YouTube Shorts, speed is everything. The short-form video world moves incredibly fast, and your workflow needs to keep up. You have to get content created, edited, and posted quickly, which means you can't afford to get bogged down with a complicated audio sync process.

Thankfully, the editing apps on our phones are built for exactly this kind of rapid-fire creation. They cram an impressive amount of power into interfaces that are actually a joy to use, letting you knock out high-quality videos without ever touching a computer.

Mobile Apps Built for Speed

Look, I love a good desktop NLE for bigger projects, but for a quick social clip, they often feel like overkill. Why waste time transferring files back and forth when you can do it all on your phone?

Two apps that have become absolute mainstays for this are CapCut and KineMaster. They do way more than just trim clips and have some surprisingly sophisticated features to make syncing audio painless.

- CapCut: This app is a powerhouse for trend-driven content. Its standout feature is the automatic beat-syncing tool. You can drop in a song, select your clips, and it will instantly cut them to the beat. It’s a game-changer for montages and music-focused videos.

- KineMaster: If you prefer a more traditional editing experience, KineMaster is for you. It gives you a multi-track timeline that feels a lot like a desktop editor, offering you that granular control to manually line up waveforms with precision.

These tools are all about helping you work fast without your content looking rushed.
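
The idea behind beat-syncing is simple enough to sketch in a few lines of Python. This is not how CapCut actually does it (real apps detect beats from the audio itself); it assumes you already know the song's tempo in beats per minute and where the first beat falls. All names here are hypothetical.

```python
def beat_times(bpm, duration_seconds, first_beat=0.0):
    """List the time of every beat in a song with a steady tempo."""
    if duration_seconds < first_beat:
        return []
    interval = 60.0 / bpm
    count = int((duration_seconds - first_beat) / interval) + 1
    return [first_beat + i * interval for i in range(count)]


def snap_to_beat(cut_time, beats):
    """Move a cut to the nearest beat."""
    return min(beats, key=lambda beat: abs(beat - cut_time))


beats = beat_times(bpm=120, duration_seconds=2.0)
rough_cuts = [0.4, 1.3, 1.8]
snapped = [snap_to_beat(cut, beats) for cut in rough_cuts]
print(beats)
print(snapped)
```

At 120 BPM the beats land every half second, so each rough cut only ever moves by a quarter of a second or less.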

A Fast Workflow for Social Content

The key to consistently pumping out content is to have a solid, repeatable system. This is especially true when you're creating engaging YouTube Shorts, where every second counts for keeping your audience hooked. Bad audio sync is an instant scroll.

Here’s a simple, real-world workflow to get your clips shot, synced, and posted without the headache:

- Prep Your Space: I know it's just a phone video, but a little prep makes a huge difference. Find a quiet spot. Less background noise means the app's sync features (and your own ears) will have a much easier time locking things in.

- Do the Clap: It might feel a little old-school, but giving a single, sharp clap on camera before you start is the best favor you can do for your future self. That spike on both the video and audio tracks is a perfect, unmistakable sync point that works every single time.

- Import and Align: Toss your clips into an app like CapCut. If you’re lining up a separate voiceover, that clap waveform will stick out like a sore thumb, making it easy to align manually. For music-driven edits, just let the beat-sync feature take over.

This little routine takes all the guesswork out of the equation and makes syncing just another quick step in your process.

My Personal Tip: I often just record my voiceovers directly inside the editing app after I've laid out my video clips. It allows me to react to the visuals as I see them, making the timing feel much more organic. Plus, it completely removes the need to sync anything later.

Timing On-Screen Text and Captions

In a world where most people scroll with their sound off, your on-screen text and captions are just as important as your audio. And just like audio, their timing is critical. Text that shows up too early kills a joke, and text that lags behind just looks sloppy.

All the good mobile editing apps have great text tools that let you dial in the timing perfectly.

- Align with Key Words: When you're using animated captions, make sure the highlighted word pops on screen the exact moment you say it.

- Pace for Reading: Don't just flash words on the screen. Give people enough time to actually read a caption before it disappears.

- Use Text as a Visual Beat: For music-heavy videos, time your text to appear and vanish on the beat of the song. It adds an extra layer of energy that really pulls the viewer in.
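
For the "pace for reading" point, a rough rule of thumb can be turned into a tiny calculator. The Python sketch below assumes a reading speed of about three words per second and a one-second minimum; both numbers are placeholder assumptions to tune for your audience, and `caption_duration` is a made-up name.

```python
def caption_duration(text, words_per_second=3.0, minimum_seconds=1.0):
    """Estimate how long a caption should stay on screen, in seconds."""
    word_count = len(text.split())
    return max(minimum_seconds, word_count / words_per_second)


for caption in ("Wow", "Give people time to read this"):
    print(f"{caption!r}: hold for {caption_duration(caption):.1f} s")
```

Short captions fall back to the minimum so they don't flash by, while longer ones scale with their word count.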

Once you nail these mobile workflows, you’ll be churning out perfectly synced, engaging content that's ready to grab—and hold—your audience's attention.

Common Audio Sync Questions Answered

Even when you do everything right, syncing audio and video can throw you a curveball. Every editor I know has run into bizarre sync issues, so if you're stuck, you're in good company. Let's tackle some of the most common problems you'll face.

Think of this as your personal troubleshooting guide. I'll break down the "why" behind these frustrating issues and give you practical fixes you can use right now.

Why Does My Audio Go Out of Sync Over Time?

Ah, the classic "audio drift" problem. This almost always boils down to a timing mismatch between your camera and your separate audio recorder. The number one culprit? Your camera, especially a smartphone, is likely recording with a variable frame rate (VFR) to save file space, while your audio recorder uses a rock-solid, constant clock.

Another common cause is a mismatch in audio sample rates. Maybe your camera's scratch audio is at 44.1 kHz, but your high-quality recorder is set to 48 kHz. To fix drift, your first step should be converting the video to a constant frame rate (CFR) with a free tool like HandBrake. If it’s a sample rate issue, you can usually just resample the audio right inside your editing software to match your project settings.
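
Before converting anything, it helps to confirm that a clip really is variable frame rate. One way to reason about it: in constant frame rate footage, the gap between consecutive frames never changes. The Python sketch below checks that, assuming you've exported a list of frame timestamps (in seconds) from a media inspection tool. The function name and the one-millisecond tolerance are assumptions for illustration.

```python
def is_variable_frame_rate(timestamps, tolerance=0.001):
    """True if the gaps between consecutive frame timestamps are not all equal.

    timestamps is a list of frame times in seconds, in display order.
    """
    intervals = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    if not intervals:
        return False
    return max(intervals) - min(intervals) > tolerance


steady = [i / 30 for i in range(5)]       # evenly spaced frames at 30 fps
uneven = [0.0, 0.033, 0.066, 0.116]       # one frame arrives late

print(is_variable_frame_rate(steady))
print(is_variable_frame_rate(uneven))
```

If the check comes back true, converting to a constant frame rate before editing is the safer path.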

What Is the Easiest Way to Sync Multiple Cameras?

Honestly, the fastest and most reliable way is to let your editing software do the heavy lifting. Modern NLEs like Premiere Pro or DaVinci Resolve can handle this in just a few clicks.

All you have to do is select all your video clips and your separate audio file in your project bin. Right-click, and look for an option like "Create Multi-camera Source Sequence." When the dialog box pops up, make sure you tell it to sync using "Audio" as the reference point.

The software will then analyze the audio waveforms from every single clip and line them up perfectly. The key to making this work flawlessly is ensuring every camera recorded some scratch audio—it doesn't matter if it sounds terrible, the software just needs that waveform data to analyze.

Can I Sync Audio if I Forgot to Use a Clapperboard?

Yes, you can absolutely save it! A clapperboard or slate gives you that perfect audio and visual spike, but if you forgot it, you can find other natural sync points in your footage. It just takes a bit more manual work.

Look for moments that have a sharp, distinct sound that you can also see on screen:

- Someone setting a glass down on a table.

- The percussive sound of a door closing.

- Someone speaking "plosive" words like "pop" or "book" that create a clear spike in the waveform.

You'll need to zoom way in on your timeline and manually nudge the audio track until the peak of the waveform lines up exactly with the visual action. It requires some patience, but it’s a lifesaver.

How Do I Sync an AI-Generated Voiceover to Video?

Syncing an AI voiceover is less about technical alignment and more about creative timing. Since the audio is created completely separate from the video, you actually flip the process around—you edit your visuals to match the pacing of the voiceover.

First, get your video edit pretty close to final so the timing is locked in. Then, generate your AI voiceover in smaller chunks that correspond to the scenes in your video. When you drop the audio clips into your timeline, you can fine-tune the timing by adding or trimming pauses between sentences to match an on-screen graphic or a specific action. Most editors have a rate stretch tool that lets you slightly speed up or slow down a phrase without changing the pitch, giving you incredibly precise control.
